Great outreach isn’t just about creativity – it’s about data-driven decisions. Don’t just throw a message out there and hope it sticks. A/B testing helps you uncover what actually works with your audience by comparing two variations of the same message. Even small tweaks can make a big impact on open rates, responses, and conversions.

Let’s walk through a couple of real examples, show you how to run your first A/B test, and help you start generating better results – fast.

👋 Why A/B Testing Matters

Recruiters are often one great message away from a booked call – but knowing what message gets that reply? That’s where A/B testing comes in.

By experimenting with small changes – subject lines, openings, questions, or personalization – you’ll learn what really moves the needle in your outreach.

This guide gives you real examples, plus a simple way to start testing today.

Test #1: Personalization Performs

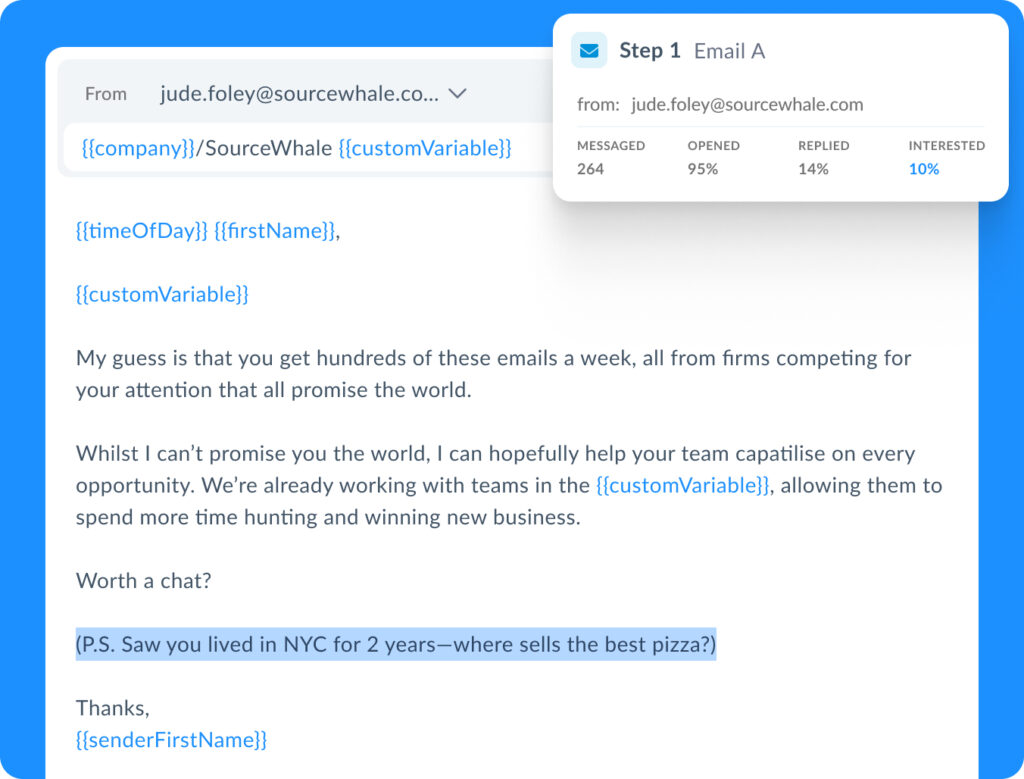

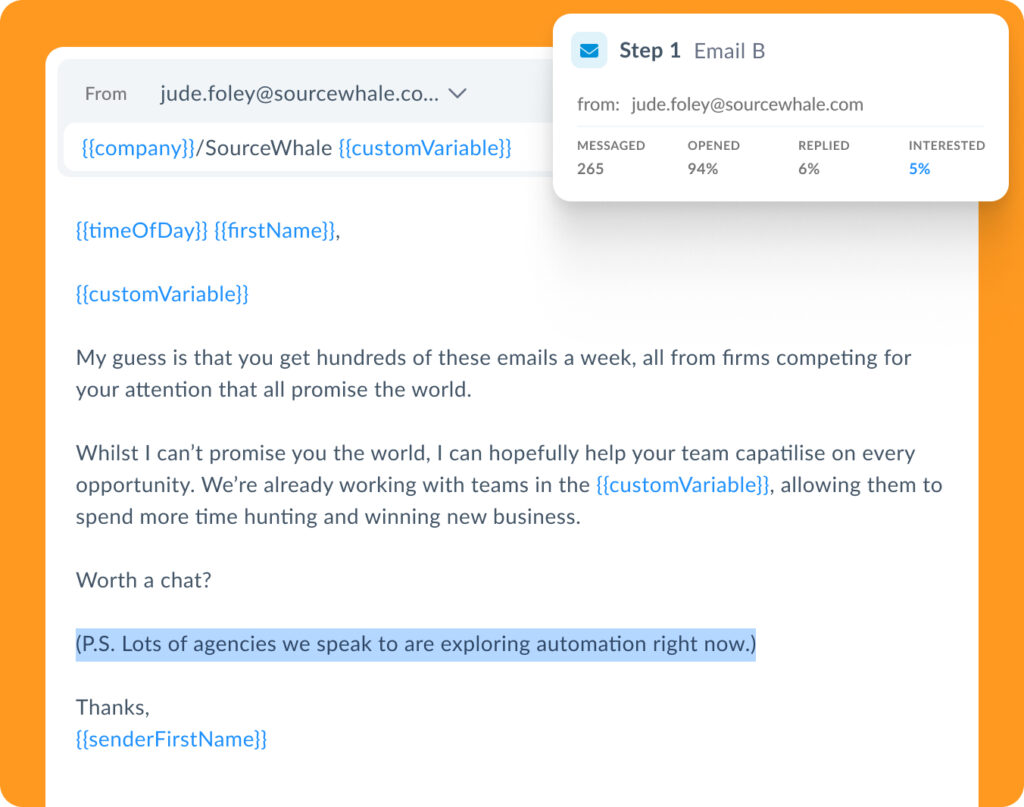

One of our SDRs here at SourceWhale wanted to know: Does real personalization move the needle?

So, they ran a simple A/B test using a P.S. line in their email outreach:

- Email A included a personalized P.S. tied to the individual:

P.S. Saw you lived in NYC for 2 years – where sells the best pizza?

- Email B included an industry-relevant but non-personalized P.S.:

P.S. Lots of agencies we speak to are exploring automation right now.

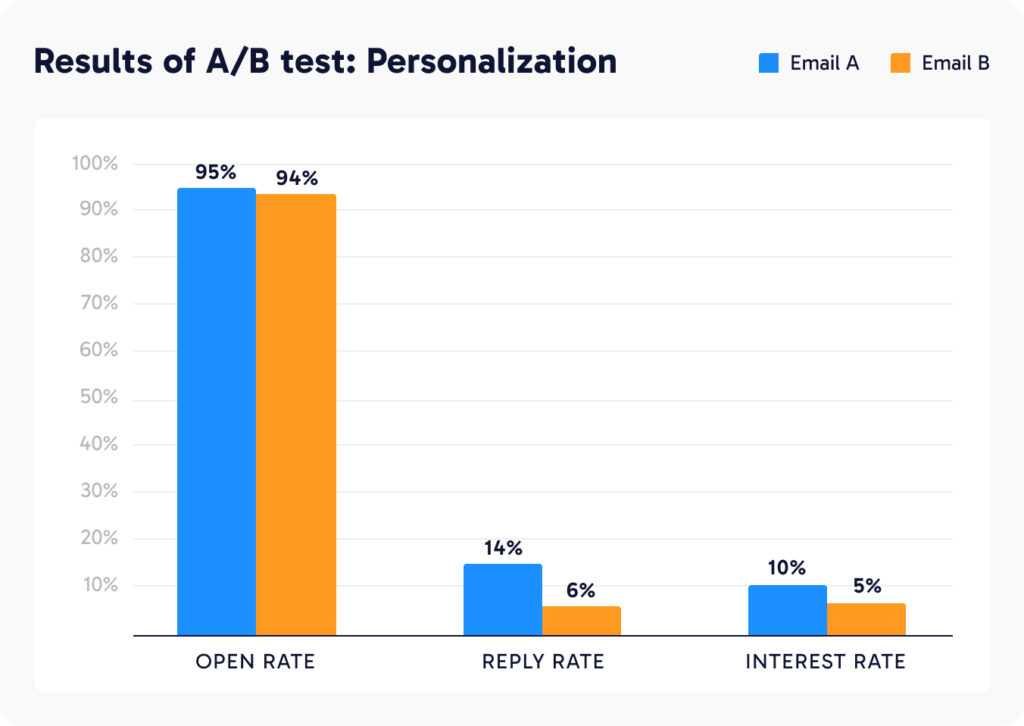

The result?

Email A saw an increase of more than 100% in both response rate and interested rate. Personalization is key.

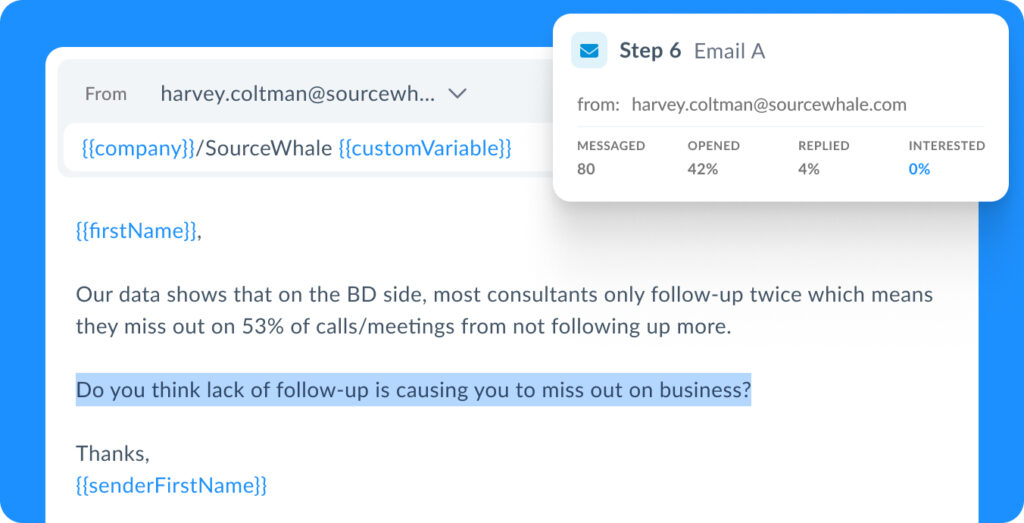

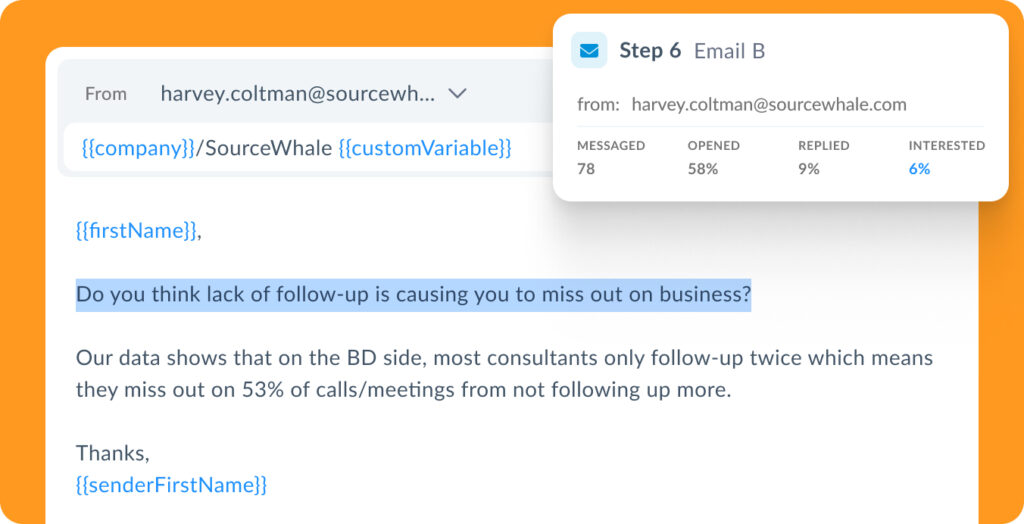

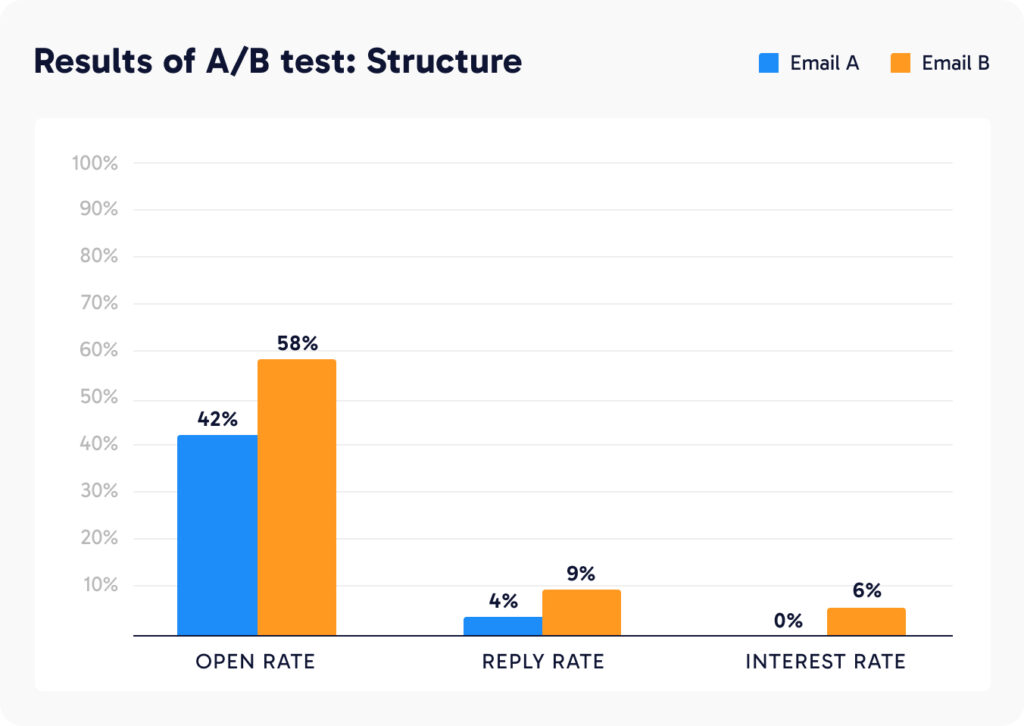
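
To make "over 100% increase" concrete: a lift above 100% means Email A's rate was more than double Email B's. The sketch below shows the arithmetic. The function names and the counts are made up for illustration; they are not the SDR's actual numbers.

```python
def rate(positive: int, sent: int) -> float:
    """Share of sent emails that got the outcome (a reply, interest, ...)."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return positive / sent


def relative_lift(rate_a: float, rate_b: float) -> float:
    """Percentage change of A over baseline B; 100 means A doubled B."""
    if rate_b <= 0:
        raise ValueError("baseline rate must be above zero")
    return (rate_a - rate_b) / rate_b * 100


# Made-up counts for illustration only, not the results of the test above.
reply_rate_a = rate(positive=9, sent=50)  # personalized P.S.
reply_rate_b = rate(positive=4, sent=50)  # industry-relevant P.S.
lift = relative_lift(reply_rate_a, reply_rate_b)

print(f"Email A reply rate: {reply_rate_a:.0%}")
print(f"Email B reply rate: {reply_rate_b:.0%}")
print(f"Lift of A over B: {lift:.0f}%")
```

Reporting the lift alongside the raw counts helps, because a large percentage on a handful of replies can look more impressive than it is.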

Test #2: Same Content, Different Structure

In our second example, the message content stayed the same – but the order was changed.

- Email A started with a data point and then ended with a question.

- Email B reversed the order. It led with a compelling question:

“Do you think lack of follow-up is causing you to miss out on business?”

Then followed with data:

“Our data shows most consultants only follow-up twice…”

The result?

Email B (question first) dramatically outperformed Email A. Leading with a question drives more engagement. Curiosity wins.

📧 How to Run Your Own A/B Test

Running your own A/B test is one of the simplest – and most effective – ways to uncover what’s resonating with your audience. Whether it’s your subject line, opening sentence, or CTA, small tweaks can lead to big results.

This quick guide walks you through how to run a lean, insightful A/B test in just a few steps – no fancy tools or massive lists required.

- Pick a Focus

Choose one element to test: subject line, opening sentence, CTA, sign-off, etc.

- Segment a Small Audience

Use a group of 40–100 contacts with similar roles, industries, or seniority.

- Send Two Versions

Keep everything else the same – only change one variable.

- Track Metrics

Focus on: Open rate (subject lines) / Reply rate (copy) / Interested rate (overall intent).
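
If you are running the test by hand rather than in a sequencing tool, the steps above can be sketched in a few lines of Python. This is a minimal illustration, not a description of how any particular tool works: the contact addresses, the `split_ab` and `rates` helpers, and the outcome data are all hypothetical. Shuffling before splitting matters, because it stops you from unconsciously giving one version the "better" contacts.

```python
import random

METRICS = ("opened", "replied", "interested")


def split_ab(contacts, seed=7):
    """Shuffle a copy of the contact list and cut it into two halves."""
    shuffled = list(contacts)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    half = len(shuffled) // 2
    return {"A": shuffled[:half], "B": shuffled[half:]}


def rates(group, outcomes):
    """Return the share of a group that reached each metric.

    outcomes maps a contact to the set of events recorded for them;
    contacts missing from the mapping count as no open and no reply.
    """
    if not group:
        raise ValueError("group is empty")
    return {
        metric: sum(metric in outcomes.get(contact, set()) for contact in group) / len(group)
        for metric in METRICS
    }


# Hypothetical data: 60 placeholder contacts and a few made-up outcomes.
contacts = [f"contact{i}@example.com" for i in range(60)]
groups = split_ab(contacts)
outcomes = {
    groups["A"][0]: {"opened", "replied", "interested"},
    groups["A"][1]: {"opened"},
    groups["B"][0]: {"opened", "replied"},
}

for name, members in groups.items():
    print(name, len(members), rates(members, outcomes))
```

Each group gets one version of the message, and the same three metrics are tallied per group so the comparison stays like for like.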

💡 A/B Test Ideas for Recruiters

Common Subject Line Tests

Across high-performing campaigns, recruiters most often test:

- Generic vs personalized subject lines (use fields like {{firstName}} or {{company}})

- Professional tone vs conversational tone

- Short curiosity-based subjects vs descriptive, role-led subjects

- “RE:” style threading vs fresh subject lines

- Urgency vs low-pressure asks

👉 The goal is usually open-rate optimization first, before testing message content.
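
Fields like {{firstName}} only help if they are filled in correctly; a subject line that goes out reading "Thoughts on this role, ?" will skew a test for the wrong reasons. The sketch below shows one way such placeholders could be filled, with a fallback for missing data. It is an assumption-laden illustration: the `render` function, the regular expression, and the fallback values are hypothetical and do not describe how SourceWhale or any other tool handles merge fields.

```python
import re
from typing import Optional

# Matches placeholders such as {{firstName}} or {{ company }}.
FIELD = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template: str, contact: dict, fallbacks: Optional[dict] = None) -> str:
    """Fill {{field}} placeholders from a contact record.

    Blank or missing values use the fallback for that field; if there is
    no fallback either, raise instead of sending a broken subject line.
    """
    fallbacks = fallbacks or {}

    def fill(match):
        key = match.group(1)
        value = str(contact.get(key) or "").strip()
        if value:
            return value
        if key in fallbacks:
            return fallbacks[key]
        raise KeyError(f"no value or fallback for field '{key}'")

    return FIELD.sub(fill, template)


subject_b = "Thoughts on this Senior Operations Manager, {{firstName}}?"
print(render(subject_b, {"firstName": "Priya"}))
print(render(subject_b, {"firstName": ""}, fallbacks={"firstName": "there"}))
```

Failing loudly on a missing field is a deliberate choice here: it is easier to fix a contact record before sending than to explain a blank greeting afterwards.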

Subject Line Test Examples

1. Generic vs Specific

A: Senior Operations Manager Available

B: Thoughts on this Senior Operations Manager, {{firstName}}?

Why test this: Measures whether specificity + light personalization outperforms a straightforward role announcement.

2. Formal vs Conversational

A: Market update: UK insurance hiring trends

B: A quick heads-up on what’s happening in insurance hiring right now

Why test this: Tests professional language vs a more human, conversational tone.

3. Broad vs Contextual

A: Interim Technology Lead Available

B: Interim Technology Lead with SaaS scale-up experience – available now

Why test this: Evaluates whether adding context increases relevance and opens.

4. Direct Ask vs Soft Question

A: Compliance Manager – immediately available

B: Would it help to see a Compliance Manager CV this week?

Why test this: Compares assertive delivery with a softer, permission-based approach.

5. Industry Insight vs Hiring Angle

A: ERP hiring trends we’re seeing in 2026

B: Hiring ERP specialists in 2026 – what’s changing?

Why test this: Insight-led subject vs outcome-led subject.

6. “RE:” Threading vs Clean Subject

A: RE: Operations Leadership Support

B: Strong Operations Leadership for High-Volume Environments

Why test this: Tests familiarity and perceived continuation vs clarity and value.

7. Results-Oriented vs Credentials-Oriented

A: Plant Manager – driving productivity improvements

B: Plant Manager | Lean Six Sigma | Multi-site leadership

Why test this: Outcomes vs qualifications.

8. Short vs Descriptive

A: Can I run something past you?

B: Quick question about your engineering hiring plans

Why test this: Curiosity-driven vs clarity-driven opens.

9. Generic Urgency vs Personalized Urgency

A: Please review when you get a moment

B: {{firstName}}, could you take a quick look today?

Why test this: Personal urgency vs neutral follow-up.

Common Message Tests

Once subject lines are optimized for opens, the next step is typically to test what drives replies. Across high-performing campaigns, the most common message-level tests include:

- Conversational vs value-led opening lines

- Short, skimmable messages vs more detailed narratives

- Direct CTAs vs low-friction next steps

- Candidate-led messaging vs problem-led messaging

- Standard sign-offs vs personalized signatures

- Follow-ups that nudge vs follow-ups that add value

👉 The goal at this stage is usually reply-rate optimization, not just opens.

Message Test Examples

1. Opening Line Test – Conversational vs Authority-Led

A: Saw you’re scaling your sales team – impressive growth.

B: I help Series A tech companies scale sales teams efficiently – thought I’d reach out.

Why test this: Tests whether a friendly, observational opener outperforms a more direct credibility-led introduction.

2. Opening Line Test – Personalized vs Problem-Led

A: Noticed you’re hiring for multiple engineering roles this quarter.

B: Many teams are struggling to hire engineers quickly without sacrificing quality.

Why test this: Compares personalized context against a broader pain-point hook.

3. Bullet Points vs Narrative

A: This candidate has:

- 7+ years leadership experience

- Managed teams of 50+

- Strong change management background

B: This candidate brings over 7 years of leadership experience, having managed teams of 50+ and led multiple change initiatives in fast-paced environments.

Why test this: Compares a short, bullet-point intro listing candidate credentials with a more conversational, narrative style. It measures scannability against storytelling, and which better holds attention.

4. Full Pitch vs Qualifying Question

A: Full overview of the candidate’s background, experience, availability, and CV included.

B: Quick sense check – are you actively hiring for this type of role right now?

Why test this: Tests commitment-heavy messaging against a low-friction engagement approach.

5. Call-to-Action Test: Direct vs Value-Led

A: Would you be open to a quick intro call?

B: Would it be useful if I shared 1–2 relevant profiles as an example?

Why test this: Compares a traditional meeting ask against a softer, value-first CTA.

6. Call-to-Action Test: Time-Based vs Outcome-Based

A: Do you have 15 minutes for a quick chat this week?

B: Could this help speed up your hiring plans for this quarter?

Why test this: Tests time commitment vs outcome-driven motivation.

7. Follow-Up Style: Nudge vs Added Value

A: Just following up on the message I sent last week.

B: Following up with a quick update on candidate availability in your market.

Why test this: Measures whether adding new context improves response rates compared to a simple reminder.

8. Candidate-Led vs Problem-Led Messaging

A: I’m currently working with a strong Operations Manager who’s open to new roles.

B: Many operations teams are under pressure to improve output without increasing headcount.

Why test this: Tests interest driven by a specific candidate vs interest driven by a broader challenge.

9. Signature Personalization Test

A: Standard text sign-off (Name, title, company)

B: Sign-off including:

- Calendar booking link

- Headshot or personal touch

- Optional soft CTA (“Happy to share more if useful”)

Why test this: Evaluates whether humanizing the sender and reducing friction increases replies.

🧠 Pro Tips for Testing Success

- Only test one variable at a time so you know what made the difference.

- Use a sequencing tool (like SourceWhale) to A/B test at scale and track results automatically.

- Repeat and refine: What works for one audience may flop with another – keep testing.

- Document your learnings to build a playbook for what performs best in your niche.
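
One extra check worth considering before a result goes into the playbook: with groups of 40–100 contacts, a gap of a few replies can come from chance alone. The sketch below uses Fisher's exact test, a standard statistical test for small samples that is brought in here as an illustration and is not part of the method described in this guide. The function name and the counts are made up, and the 0.05 cut-off is a common convention rather than a rule.

```python
from math import comb


def fisher_exact_two_sided(hits_a, sent_a, hits_b, sent_b):
    """Two-sided Fisher's exact test p-value for a 2x2 table of replies."""
    hits = hits_a + hits_b
    ways = comb(sent_a + sent_b, hits)

    def prob(k):
        # Chance that exactly k of all replies land in group A if the
        # version sent made no difference (hypergeometric distribution).
        return comb(sent_a, k) * comb(sent_b, hits - k) / ways

    observed = prob(hits_a)
    lowest = max(0, hits - sent_b)
    highest = min(sent_a, hits)
    # Add up every split that is at least as unlikely as the one observed.
    total = sum(
        prob(k)
        for k in range(lowest, highest + 1)
        if prob(k) <= observed * (1 + 1e-9)
    )
    return min(1.0, total)


# Made-up counts for illustration: 9 replies from 50 sends vs 4 from 50.
p_value = fisher_exact_two_sided(9, 50, 4, 50)
verdict = "unlikely to be chance" if p_value < 0.05 else "could still be noise"
print(f"p = {p_value:.3f}: {verdict}")
```

If the verdict is "could still be noise", that is a reason to repeat the test on a fresh batch rather than to discard the idea.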

📝 Try It Yourself

Pick 50 leads and test something new – whether it’s how you open, close, or personalize your message. You might double your responses like our SDR did.

A/B testing isn’t just for marketers. It’s for every recruiter who wants to stand out, cut through the noise, and book more meetings.